Artificial intelligence has evolved from science fiction to an essential business tool, transforming industries from healthcare to finance. At the core of every AI system lies the AI model, a sophisticated program that can learn from data, recognize patterns, and make decisions with minimal human intervention. Understanding AI models is crucial for navigating today’s technology landscape and making informed decisions about AI adoption in your organization.

What Is an AI Model

An AI model is a computer program trained on data that learns to perform tasks or make predictions without explicit programming for each specific scenario. Unlike traditional software that follows predetermined instructions, AI models analyze data patterns to mimic human expertise in specific areas, adapting their responses based on what they’ve learned from training data.

The distinction between AI models and traditional algorithms lies in their adaptive capabilities and learning mechanisms. While conventional computer programs follow predetermined instructions in a linear fashion, AI models possess the remarkable ability to modify their behavior based on experience and exposure to new data. This characteristic enables them to improve their performance over time, making them particularly valuable for tasks that involve uncertainty, complexity, or situations where explicit programming would be impractical or impossible.

Modern AI models operate on the principle of pattern recognition and statistical inference, utilizing mathematical frameworks to identify relationships within data that may not be immediately apparent to human observers. These models can process vast amounts of information simultaneously, extracting meaningful insights and generating predictions or classifications based on learned representations of the training data. The sophistication of contemporary AI models allows them to handle multiple types of data inputs, including text, images, audio, and numerical data, often processing these different modalities simultaneously to create more comprehensive and accurate outputs.

The evolution of AI models has been marked by significant advances in both computational power and algorithmic sophistication. Early AI systems, such as the checkers and chess-playing programs developed in the 1950s, demonstrated the foundational concept of autonomous decision-making in response to dynamic inputs rather than following pre-scripted sequences. These pioneering models established the fundamental principle that AI systems should be capable of responding intelligently to changing conditions and opponent strategies, laying the groundwork for the more sophisticated models we see today.

Contemporary AI models are characterized by their ability to generalize from training data to make accurate predictions about new, unseen data points. This generalization capability is achieved through various mathematical and statistical techniques that enable models to capture underlying patterns and relationships within datasets. The process involves training algorithms on large volumes of data, allowing the models to learn representations that can be applied to novel situations with reasonable accuracy and reliability.

How Do AI Models Work

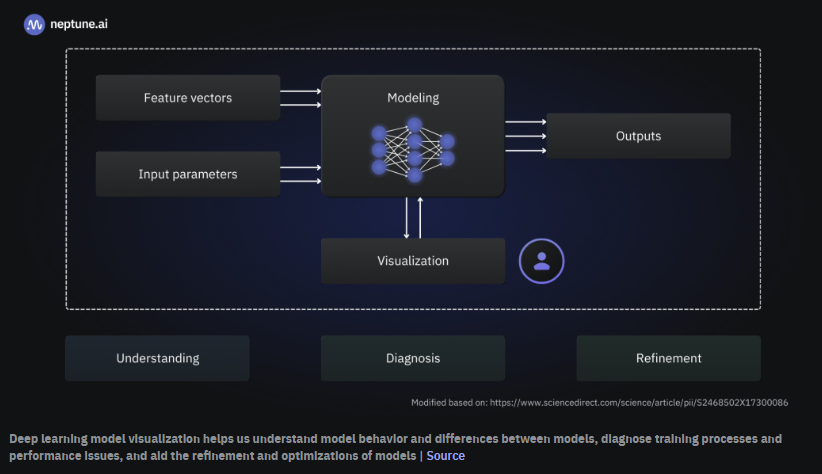

AI models operate through a systematic process that transforms raw data into actionable insights or decisions. Understanding this process helps demystify how these sophisticated systems generate their outputs.

Training: The foundation of AI model functionality begins with feeding large amounts of data to the model so it can adjust its internal parameters. During this phase, the model learns from examples, gradually improving its ability to recognize patterns and make accurate predictions. This process is similar to how humans learn from experience, but AI models can process vastly larger amounts of information simultaneously.

Pattern recognition: Once exposed to training data, the model identifies trends and relationships within the information to make informed decisions. The model looks for statistical regularities, correlations, and other patterns that help it understand the underlying structure of the data. This pattern recognition capability enables models to generalize from their training experience to new, unseen situations.

Inference: After training is complete, the model applies what it has learned to make predictions or perform tasks on new, previously unseen data. This phase demonstrates the model’s practical value, as it can now process real-world inputs and generate useful outputs based on its learned understanding of patterns and relationships.

Algorithm role: Throughout this process, algorithms serve as the decision-making rules that guide how the model learns and makes choices. Different algorithms excel at different types of tasks, and selecting the appropriate algorithm is crucial for achieving optimal performance in specific applications. Common optimization techniques like gradient descent systematically modify model parameters based on performance feedback, gradually improving accuracy.

What Are the Different Types of AI Models

AI models come in various types, each designed to address specific learning scenarios and problem types. Understanding these categories helps organizations select the most appropriate approach for their particular needs and constraints.

Supervised Learning Models

Supervised learning models learn from labeled data, where each input comes with a known correct output. This approach is similar to learning with a teacher who provides the right answers during the learning process.

- Learn from labeled data, meaning each input comes with a known output (like images tagged with names).

- Commonly used for classification (e.g., spam detection) and prediction tasks.

These models excel when historical data with known outcomes is available, such as email spam detection or medical diagnosis. The supervised approach enables high accuracy when sufficient quality labeled data is available, making it popular for business applications. Common supervised learning algorithms include linear regression for predicting continuous values, logistic regression for binary classification tasks, decision trees for interpretable rule-based decisions, and support vector machines for complex classification problems.
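To make the idea of learning from labeled examples concrete, here is a minimal sketch of a supervised classifier. It uses k-nearest neighbors, which is not one of the algorithms listed above and was chosen only because it fits in a few lines. The features, labels, and the `TRAINING_DATA` and `knn_predict` names are hypothetical.

```python
from collections import Counter
import math

# Hypothetical labeled training set: (feature vector, label).
# Features are (number of links, count of ALL-CAPS words) in an email.
TRAINING_DATA = [
    ((0, 0), "ham"),
    ((1, 0), "ham"),
    ((0, 1), "ham"),
    ((5, 4), "spam"),
    ((6, 3), "spam"),
    ((4, 5), "spam"),
]

def knn_predict(features, training_data, k=3):
    """Return the majority label among the k closest labeled examples."""
    by_distance = sorted(
        training_data,
        key=lambda example: math.dist(features, example[0]),
    )
    nearest_labels = [label for _, label in by_distance[:k]]
    return Counter(nearest_labels).most_common(1)[0][0]

print(knn_predict((5, 5), TRAINING_DATA))  # close to the spam examples
print(knn_predict((1, 1), TRAINING_DATA))  # close to the ham examples
```

The known labels are what make this supervised: the prediction for a new email is taken directly from the answers attached to similar past emails.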

Unsupervised Learning Models

Unsupervised learning models discover patterns in data without access to labeled examples or correct answers. These models must find structure and meaning within the data independently.

- Find patterns in unlabeled data where no explicit answers are available.

- Useful for grouping similar data points, such as customer segmentation or anomaly detection.

They excel at finding hidden patterns in unlabeled data, such as identifying customer segments based on purchasing behavior or detecting unusual patterns that might indicate fraud. Clustering algorithms like k-means, hierarchical clustering, and DBSCAN are common examples of unsupervised learning techniques that group similar data points together based on various distance or similarity measures.
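The k-means algorithm mentioned above can be sketched in plain Python. This is an illustrative version under simplifying assumptions: the initial centroids are passed in by hand, the iteration count is fixed, and the customer data is made up.

```python
import math

def kmeans(points, initial_centroids, iterations=10):
    """Plain k-means: alternate assignment and centroid-update steps."""
    centroids = list(initial_centroids)
    clusters = []
    for _ in range(iterations):
        # Assignment step: attach each point to its nearest centroid.
        clusters = [[] for _ in centroids]
        for point in points:
            nearest = min(
                range(len(centroids)),
                key=lambda i: math.dist(point, centroids[i]),
            )
            clusters[nearest].append(point)
        # Update step: move each centroid to the mean of its cluster.
        for i, cluster in enumerate(clusters):
            if cluster:  # keep the old centroid if a cluster is empty
                centroids[i] = tuple(
                    sum(coords) / len(cluster) for coords in zip(*cluster)
                )
    return centroids, clusters

# Hypothetical customers: (orders per month, average basket value).
customers = [(1, 20), (2, 22), (1, 25), (9, 80), (10, 85), (8, 90)]
centroids, clusters = kmeans(customers, initial_centroids=[(1, 20), (9, 80)])
print(centroids)
print(clusters)
```

No labels are supplied anywhere: the two customer segments emerge only from distances between data points.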

Reinforcement Learning Models

Reinforcement learning represents a distinct paradigm where models learn through interaction with an environment, receiving feedback in the form of rewards or penalties based on their actions.

- Learn by receiving feedback based on actions taken, improving through trial and error.

- Often applied in robotics, gaming, and autonomous systems.

This approach mimics the way humans and animals learn through trial and error, continuously refining strategies to maximize positive outcomes while minimizing negative consequences. The reinforcement learning framework involves an agent that takes actions within an environment, receives rewards or penalties based on those actions, and uses this feedback to improve future decision-making.
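The agent, environment, and reward loop described above can be sketched with tabular Q-learning, one common reinforcement learning method. The five-cell corridor environment, the reward of 1 at the goal, and all constants below are assumptions made for illustration.

```python
import random

N_STATES = 5          # corridor cells 0..4; cell 4 is the goal
GOAL = N_STATES - 1
ACTIONS = (-1, +1)    # index 0 = step left, index 1 = step right

def step(state, action_index):
    """Environment: move, clamp to the corridor, reward 1 at the goal."""
    next_state = min(max(state + ACTIONS[action_index], 0), GOAL)
    reward = 1.0 if next_state == GOAL else 0.0
    return next_state, reward

def train(episodes=500, alpha=0.5, gamma=0.9, epsilon=0.2, seed=0):
    rng = random.Random(seed)
    q = [[0.0, 0.0] for _ in range(N_STATES)]
    for _ in range(episodes):
        state = 0
        while state != GOAL:
            # Explore with probability epsilon (and on ties); otherwise exploit.
            if rng.random() < epsilon or q[state][0] == q[state][1]:
                action = rng.randrange(2)
            else:
                action = 0 if q[state][0] > q[state][1] else 1
            next_state, reward = step(state, action)
            # Q-learning update: move the estimate toward
            # reward + discounted best value of the next state.
            target = reward + gamma * max(q[next_state])
            q[state][action] += alpha * (target - q[state][action])
            state = next_state
    return q

q_table = train()
policy = ["left" if left > right else "right" for left, right in q_table[:GOAL]]
print(policy)
```

The agent is never told that moving right is correct. It discovers this from the rewards it collects while trying both actions.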

Deep Learning Models

Deep learning models utilize layered neural networks that mimic aspects of human brain structure to analyze complex data types like images, speech, and natural language.

- Utilize layered neural networks, loosely inspired by the human brain, to analyze complex data like images and speech.

- Excel in advanced tasks such as voice assistants and image recognition.

Multiple processing layers enable these models to automatically learn hierarchical feature representations from raw data, eliminating the need for manual feature engineering that was traditionally required in machine learning applications. Convolutional neural networks excel at image processing tasks by learning spatial hierarchies of features, while recurrent neural networks are designed to handle sequential data such as text or time series information.
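To illustrate what "layers" means mechanically, the following sketch runs a forward pass through a tiny two-layer network. The weights are hand-picked rather than learned, chosen so the network computes XOR, a function that a single linear layer cannot represent. The helper names `dense` and `forward` are hypothetical.

```python
def relu(x):
    """Rectified linear unit: pass positives through, clamp negatives to zero."""
    return max(0.0, x)

def dense(inputs, weights, biases, activation=None):
    """One fully connected layer: weighted sums plus bias, then optional activation."""
    outputs = []
    for neuron_weights, bias in zip(weights, biases):
        total = sum(w * x for w, x in zip(neuron_weights, inputs)) + bias
        outputs.append(activation(total) if activation else total)
    return outputs

# Hand-picked (not learned) weights chosen so the network computes XOR.
HIDDEN_WEIGHTS = [[1.0, 1.0], [1.0, 1.0]]
HIDDEN_BIASES = [0.0, -1.0]
OUTPUT_WEIGHTS = [[1.0, -2.0]]
OUTPUT_BIASES = [0.0]

def forward(x1, x2):
    """Hidden layer with ReLU, followed by a linear output layer."""
    hidden = dense([x1, x2], HIDDEN_WEIGHTS, HIDDEN_BIASES, activation=relu)
    return dense(hidden, OUTPUT_WEIGHTS, OUTPUT_BIASES)[0]

for a, b in [(0, 0), (0, 1), (1, 0), (1, 1)]:
    print(a, b, forward(a, b))
```

In a real deep learning model the weights would be found by training, and there would be many more layers and neurons, but each layer performs this same kind of computation.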

Foundation Models and Large Language Models

Foundation models represent a paradigm shift in AI development, moving away from task-specific models toward large, versatile systems that can be adapted for multiple applications.

- Trained on vast amounts of diverse data to understand and generate human language effectively.

- Power applications like chatbots, machine translators, and content creation tools.

These models are typically trained on internet-scale datasets containing diverse types of information, enabling them to develop broad knowledge representations that can be applied to various tasks. Large language models represent one of the most prominent examples of foundation models, demonstrating remarkable capabilities in text generation, comprehension, and reasoning. Companies like OpenAI and Anthropic have developed sophisticated foundation models that can be fine-tuned for specific business applications.

How Are AI Models Trained and Developed

The process of creating effective AI models involves several critical phases, each requiring careful attention to ensure the final system performs reliably in real-world applications.

Data Collection and Preparation

The foundation of any successful AI model lies in gathering high-quality, relevant datasets essential for accurate learning.

- Gather quality, relevant datasets essential for accurate learning.

- Clean and format data by removing errors and standardizing inputs to improve training effectiveness.

Data collection involves identifying and acquiring information that represents the problem domain comprehensively, ensuring coverage of different scenarios the model will encounter. Data preparation involves cleaning, formatting, and organizing information to ensure it can be effectively used by learning algorithms, often requiring domain expertise and significant manual effort. Data quality directly impacts model performance, making this phase crucial for achieving reliable results.

Training Algorithms and Optimization

Training involves algorithms that iteratively adjust the model’s parameters to minimize prediction errors and improve performance.

- Algorithms adjust the model’s parameters iteratively to minimize prediction errors; gradient descent is a common method for this.

The training process typically uses optimization techniques such as gradient descent to minimize loss functions that measure the difference between model predictions and desired outputs. The training process is often computationally intensive, particularly for large models, requiring specialized hardware and careful management of computational resources. Popular frameworks like TensorFlow and PyTorch facilitate model training with optimized algorithms and tools.
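As a rough illustration of gradient descent minimizing a loss function, the sketch below fits a straight line to five points using mean squared error. The data, the learning rate, and the `fit_line` name are assumptions for this example. Frameworks such as TensorFlow and PyTorch automate the gradient computation that is written out by hand here.

```python
def fit_line(xs, ys, learning_rate=0.05, epochs=2000):
    """Fit y = w * x + b by batch gradient descent on mean squared error."""
    w, b = 0.0, 0.0
    n = len(xs)
    for _ in range(epochs):
        # Prediction error for every training example.
        errors = [(w * x + b) - y for x, y in zip(xs, ys)]
        # Gradients of the mean squared error with respect to w and b.
        grad_w = 2.0 / n * sum(e * x for e, x in zip(errors, xs))
        grad_b = 2.0 / n * sum(errors)
        # Step against the gradient to reduce the loss.
        w -= learning_rate * grad_w
        b -= learning_rate * grad_b
    return w, b

xs = [0.0, 1.0, 2.0, 3.0, 4.0]
ys = [1.0, 3.0, 5.0, 7.0, 9.0]   # generated from y = 2x + 1
w, b = fit_line(xs, ys)
print(round(w, 3), round(b, 3))
```

Each pass nudges `w` and `b` in the direction that lowers the loss, so the estimates approach the slope and intercept used to generate the data.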

Model Parameters and Hyperparameters

Understanding the distinction between parameters and hyperparameters is crucial for effective model development.

- Parameters are the internal values (such as weights) the model learns from data, while hyperparameters are the settings chosen before training, such as learning rate or number of layers.

Model parameters represent the internal configuration variables that determine how an AI system processes data and generates outputs. These parameters are learned during the training process through optimization algorithms that adjust their values to minimize prediction errors or maximize performance on training tasks. Hyperparameters are external configuration settings that must be chosen before training begins, including learning rates that control how quickly models adapt to new information, batch sizes that determine how much data is processed simultaneously, and architectural choices such as the number of layers in a neural network.
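The difference can be seen in a toy example: `w` below is a parameter that training updates, while `learning_rate` is a hyperparameter fixed before training starts. The loss function `(w - 3)^2` and the three learning rates are hypothetical values picked to show how a hyperparameter choice changes the outcome.

```python
def minimize(learning_rate, steps=50, start=0.0):
    """Gradient descent on the toy loss (w - 3)^2, whose minimum is at w = 3."""
    w = start                            # parameter: updated by training
    for _ in range(steps):
        gradient = 2.0 * (w - 3.0)
        w -= learning_rate * gradient    # learning_rate: hyperparameter, set up front
    return w

for lr in (0.1, 0.5, 1.1):
    print(lr, minimize(lr))
```

With a learning rate of 0.1 or 0.5 the parameter settles at the minimum, while 1.1 overshoots further on every step and diverges. This is why hyperparameters are usually tuned against validation data.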

Validation and Performance Evaluation

Testing model accuracy using data the model hasn’t seen before ensures it can generalize well to new situations.

- Test the model’s accuracy using new data it hasn’t seen before to ensure it generalizes well.

- Avoid overfitting, where the model learns too specifically from training data and performs poorly on new data.

The validation process uses data that was not included in training to evaluate model performance and make adjustments to hyperparameters or training procedures. Testing provides a final assessment of model capabilities using completely separate datasets that simulate real-world deployment conditions. The iterative nature of AI model development requires continuous refinement and optimization based on performance feedback.
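A small sketch shows why held-out data matters. Both models below score perfectly on the training set, but only one generalizes. The dataset, the even/odd split, and the two toy models are assumptions made for illustration.

```python
def accuracy(predict, examples):
    """Fraction of (x, label) examples that predict() labels correctly."""
    return sum(predict(x) == label for x, label in examples) / len(examples)

# Hypothetical dataset: the true rule is "label is 1 when x >= 10".
data = [(x, int(x >= 10)) for x in range(20)]
train_set = data[0::2]        # even x values
validation_set = data[1::2]   # odd x values, never seen during fitting

# Model A memorizes the training set and guesses 0 for anything unseen.
lookup = dict(train_set)
def memorizer(x):
    return lookup.get(x, 0)

# Model B learns a single threshold: the smallest training x labeled 1.
threshold = min(x for x, label in train_set if label == 1)
def threshold_rule(x):
    return int(x >= threshold)

for name, model in [("memorizer", memorizer), ("threshold", threshold_rule)]:
    print(name, accuracy(model, train_set), accuracy(model, validation_set))
```

The memorizer is an extreme case of overfitting: its training accuracy is perfect, yet on validation data it does no better than always guessing 0.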

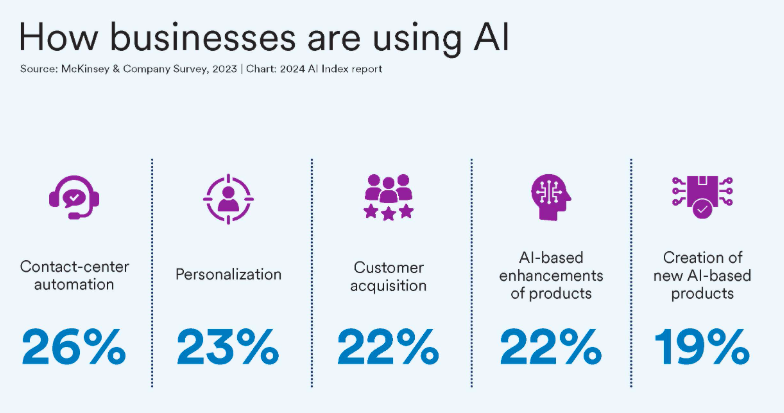

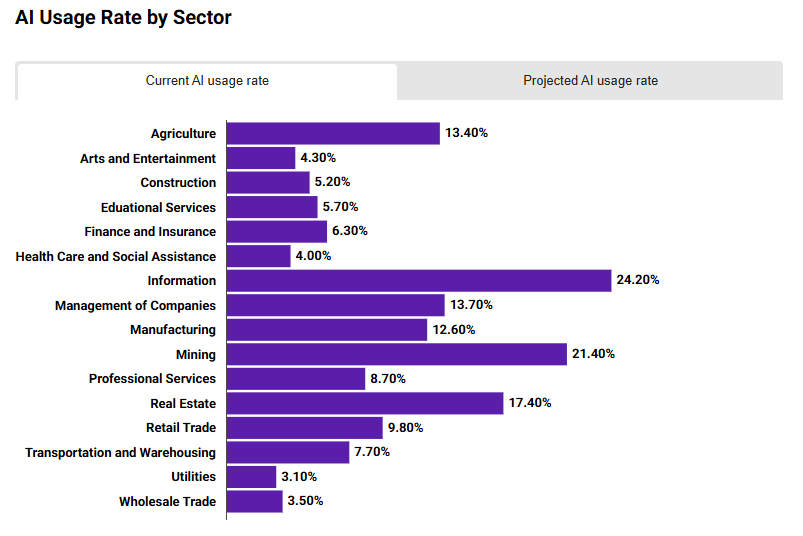

What Are the Main Applications of AI Models in Business

AI models have found practical applications across virtually every industry, demonstrating their versatility and value in solving real-world business challenges.

Healthcare

AI models are transforming healthcare through improved diagnostic accuracy and personalized treatment approaches.

- Diagnosing diseases through medical imaging analysis and recommending treatments tailored to patient data.

Medical imaging analysis enables AI models to detect patterns in X-rays, MRIs, and other scans that might indicate diseases like cancer, often achieving accuracy comparable to human specialists. Treatment recommendation systems analyze patient data, including medical history and genetic information, to suggest personalized therapy options that maximize effectiveness while minimizing side effects. Drug discovery applications use AI to identify promising compounds and optimize clinical trial designs, potentially reducing development time and costs.

Finance

The financial sector leverages AI models for risk management, fraud prevention, and customer service enhancement.

- Detecting fraudulent transactions by spotting unusual patterns and assessing credit risk for loan approvals.

Fraud detection systems analyze transaction patterns in real-time to identify suspicious activity, protecting both institutions and customers from financial crimes. Credit scoring models evaluate loan applications by analyzing multiple data sources to assess default risk more accurately than traditional methods. Algorithmic trading systems use AI to analyze market data and execute trades at speeds impossible for human traders, optimizing investment strategies.

Transportation and Logistics

AI models optimize movement of people and goods through intelligent route planning and autonomous systems.

- Optimizing routes to reduce delivery times and improve supply chain efficiency.

Route optimization analyzes real-time traffic data, weather conditions, and historical patterns to minimize delivery times and fuel consumption. Supply chain management uses predictive models to optimize inventory levels, reduce waste, and improve overall efficiency. Autonomous vehicle systems integrate multiple AI models to perceive the environment, plan routes, and control vehicle movement safely.

Retail and Consumer Technology

Retail applications focus on personalizing customer experiences and optimizing business operations.

- Personalizing shopping experiences with recommendation engines and managing inventory effectively.

Recommendation engines analyze customer behavior and preferences to suggest products, increasing sales and customer satisfaction. Inventory management systems predict demand patterns to optimize stock levels, reducing both stockouts and excess inventory costs. Dynamic pricing models adjust prices in real-time based on demand, competition, and other market factors.

Social Media and Content Moderation

Large-scale content platforms rely on AI models to manage user-generated content and enhance user experiences.

- Automatically flagging inappropriate content and enhancing user engagement through personalized feeds.

Content moderation systems automatically identify and filter inappropriate or harmful content, enabling platforms to maintain community standards at scale. Personalized feed algorithms curate content based on user interests and engagement patterns, improving user satisfaction and platform engagement. Automated translation and accessibility features make platforms more inclusive and globally accessible.

What Are the Risks and Ethical Considerations in AI Models

While AI models offer significant benefits, their deployment raises important concerns that organizations must address to ensure responsible and effective use.

AI Bias and Fairness

AI models can perpetuate or amplify existing societal biases, leading to unfair or discriminatory outcomes.

- Models can inherit biases present in training data, leading to unfair or discriminatory outcomes.

- Implement mitigation strategies to detect and reduce bias.

The NIST AI Risk Management Framework identifies three primary categories of bias in AI systems: systemic bias that reflects broader social and institutional inequalities, computational and statistical bias that arises from data collection, sampling, or algorithmic issues, and human-cognitive bias introduced through the cognitive limitations or prejudices of individuals involved in system development and use. Organizations working with AI ethics consulting can develop comprehensive strategies to identify and mitigate these biases throughout the development lifecycle.
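One simple way to start detecting bias is to compare how often a model produces a favorable outcome for different groups. The sketch below computes a demographic parity difference, which is only one of several fairness metrics and is not prescribed by the framework above. The group names and decisions are made up.

```python
def selection_rate(decisions):
    """Share of positive (1) decisions in a list of 0/1 outcomes."""
    return sum(decisions) / len(decisions)

def demographic_parity_difference(decisions_by_group):
    """Gap between the highest and lowest group selection rates (0 means parity)."""
    rates = {
        group: selection_rate(decisions)
        for group, decisions in decisions_by_group.items()
    }
    return max(rates.values()) - min(rates.values()), rates

# Hypothetical loan-approval outputs (1 = approved) for two groups.
decisions = {
    "group_a": [1, 1, 1, 0, 1, 1, 0, 1],   # 6 of 8 approved
    "group_b": [1, 0, 0, 1, 0, 0, 1, 0],   # 3 of 8 approved
}
gap, rates = demographic_parity_difference(decisions)
print(rates, gap)
```

A large gap does not prove discrimination on its own, but it flags where a closer review of the data and the model is warranted.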

Transparency and Explainability

Many AI models operate as “black boxes” where decision-making processes are not easily interpretable by humans.

- Ensure stakeholders understand how decisions are made by AI systems for accountability and trust.

Explainability challenges are particularly acute for complex models like deep neural networks, where decisions emerge from millions of parameter interactions. Trust and accountability require stakeholders to understand how AI systems reach their conclusions, especially in high-stakes applications like healthcare or criminal justice. Developing interpretable models or explanation techniques helps build confidence in AI systems and enables proper oversight.

Security and Privacy

AI models often process sensitive data, requiring robust protection measures to prevent misuse or unauthorized access.

- Protect sensitive data through encryption, access controls, and regulatory compliance to prevent misuse.

Data protection involves implementing encryption, access controls, and secure data handling practices throughout the model lifecycle. Privacy-preserving techniques like differential privacy and federated learning enable model training while protecting individual privacy. Organizations must comply with relevant regulations such as GDPR or CCPA while still enabling effective model development and deployment.
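To give a flavor of differential privacy, the sketch below answers a counting query with Laplace noise added, a standard building block of the technique. The records, the `epsilon` value, and the function names are assumptions. A production system would also need to track the total privacy budget spent across queries.

```python
import random

def laplace_noise(scale, rng):
    """Sample Laplace(0, scale) as the difference of two exponential draws."""
    return rng.expovariate(1.0 / scale) - rng.expovariate(1.0 / scale)

def private_count(values, predicate, epsilon, rng):
    """Counting query with Laplace noise; a count has sensitivity 1."""
    true_count = sum(1 for v in values if predicate(v))
    # Smaller epsilon means more noise and stronger privacy.
    return true_count + laplace_noise(scale=1.0 / epsilon, rng=rng)

rng = random.Random(42)
ages = [23, 35, 41, 52, 67, 29, 73, 38, 45, 61]   # hypothetical records
noisy = private_count(ages, lambda age: age >= 60, epsilon=1.0, rng=rng)
print(noisy)   # a noisy answer near the true count of 3
```

Because adding or removing one person changes the true count by at most 1, noise at this scale limits what the published answer reveals about any single individual.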

Regulatory Compliance and Governance

The evolving regulatory landscape requires organizations to align AI development with legal requirements and ethical standards.

- Align AI development with laws and ethical standards to maintain social responsibility.

AI governance consulting helps organizations establish clear responsibility for AI system oversight, including regular audits and performance reviews. Risk management processes must identify potential negative impacts and establish mitigation strategies before deployment. Organizations should stay informed about emerging regulations like the EU AI Act and industry best practices for responsible AI development.

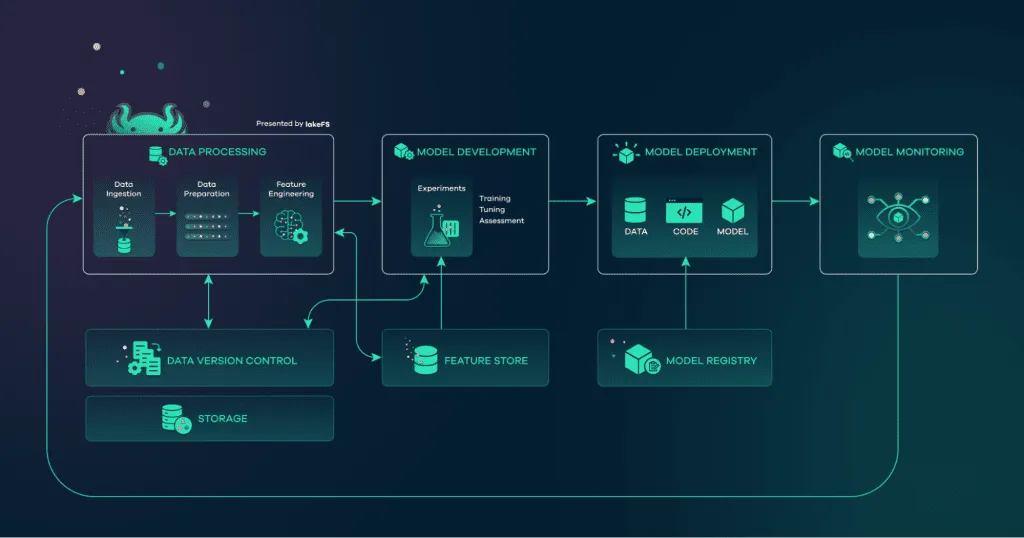

How Are AI Models Deployed and Monitored in Production

Successfully transitioning AI models from development to production requires careful planning, robust infrastructure, and ongoing monitoring to ensure reliable performance.

Deployment Strategies and Infrastructure

Effective deployment requires choosing appropriate infrastructure and integration approaches based on specific requirements.

- Integrate AI models with existing IT systems, choosing between cloud-based or on-premises solutions.

Cloud-based solutions offer scalability and managed services but require consideration of data security and latency requirements. On-premises deployment provides greater control and security but requires internal expertise and infrastructure investment. Edge deployment brings AI capabilities closer to data sources, reducing latency but limiting computational resources. Organizations can benefit from AI workflow automation services to streamline integration with existing business systems.

Monitoring Data Drift and Model Drift

Production AI models face unique challenges as real-world conditions change over time.

- Detect changes in incoming data or model performance over time that could degrade accuracy.

Data drift occurs when input data characteristics change compared to training data, potentially degrading model performance even when the underlying relationships remain stable. Model drift, often called concept drift, happens when the relationships between inputs and outputs change, making previously learned patterns less accurate or relevant. Continuous monitoring systems track these changes and alert operators when performance degradation is detected.
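A data drift check can be as simple as comparing the distribution of one input feature in production against its distribution at training time. The sketch below uses the two-sample Kolmogorov-Smirnov statistic as one possible measure. The feature values and the alert threshold are hypothetical.

```python
from bisect import bisect_right

def ks_statistic(reference, current):
    """Largest gap between the two empirical cumulative distribution functions."""
    ref_sorted, cur_sorted = sorted(reference), sorted(current)
    largest_gap = 0.0
    for value in ref_sorted + cur_sorted:
        # Fraction of each sample that is less than or equal to value.
        ref_cdf = bisect_right(ref_sorted, value) / len(ref_sorted)
        cur_cdf = bisect_right(cur_sorted, value) / len(cur_sorted)
        largest_gap = max(largest_gap, abs(ref_cdf - cur_cdf))
    return largest_gap

DRIFT_THRESHOLD = 0.3   # hypothetical alert level; tune per feature

training_feature = [10, 12, 11, 13, 12, 11, 10, 12]
live_same = [11, 12, 10, 13, 12, 11, 12, 10]
live_shifted = [15, 17, 16, 18, 17, 16, 15, 17]

for name, live in [("same", live_same), ("shifted", live_shifted)]:
    score = ks_statistic(training_feature, live)
    print(name, score, "DRIFT" if score > DRIFT_THRESHOLD else "ok")
```

A check like this only looks at inputs. Detecting a change in the input-output relationship also requires comparing predictions against ground-truth outcomes once they become available.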

Performance Metrics and Alerting

Ongoing assessment ensures models continue to meet performance requirements and business objectives.

- Use key indicators like accuracy, precision, and recall to ensure the model continues to perform reliably.

Key performance indicators include accuracy metrics specific to the task, computational resource utilization, response times, and system availability. Automated alerting systems notify operators when metrics exceed acceptable thresholds, enabling rapid response to issues. Regular performance reviews help identify trends and opportunities for improvement.
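The accuracy, precision, and recall indicators mentioned above can be computed from a batch of labeled production predictions, with a threshold check standing in for an alerting system. The labels, predictions, and the `MIN_RECALL` threshold are assumptions for this sketch.

```python
def classification_metrics(y_true, y_pred):
    """Accuracy, precision, and recall for binary labels (1 = positive)."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)   # true positives
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)   # false positives
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)   # false negatives
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)   # true negatives
    return {
        "accuracy": (tp + tn) / len(pairs),
        "precision": tp / (tp + fp) if tp + fp else 0.0,
        "recall": tp / (tp + fn) if tp + fn else 0.0,
    }

MIN_RECALL = 0.8   # hypothetical alerting threshold

y_true = [1, 1, 1, 1, 0, 0, 0, 0, 0, 0]
y_pred = [1, 1, 0, 0, 1, 0, 0, 0, 0, 0]
metrics = classification_metrics(y_true, y_pred)
print(metrics)
if metrics["recall"] < MIN_RECALL:
    print("ALERT: recall below threshold")
```

Here accuracy looks acceptable at 0.7 while recall is only 0.5, which is why monitoring a single metric can hide problems.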

Model Versioning and Continuous Improvement

Managing model updates and improvements requires systematic approaches to maintain reliability and enable rollback if needed.

- Update and retrain models as needed to maintain relevance and effectiveness in changing environments.

Version control systems track changes to models, training data, and deployment configurations, enabling reproducibility and rollback capabilities. A/B testing allows organizations to validate new models with real users while limiting potential negative impacts. Continuous improvement processes incorporate feedback from production performance to guide future development efforts.

What Are the Emerging Trends in AI Modeling

The AI landscape continues evolving rapidly, with several key trends shaping the future of model development and deployment.

Growing Scale and Foundation Models

The development of increasingly large models trained on diverse datasets continues to drive advances in AI capabilities.

- Development of increasingly large models trained on diverse datasets to improve understanding and generation capabilities.

Larger models often achieve better performance across multiple tasks, though with diminishing returns and increasing costs. The foundation model approach enables organizations to build applications on top of pre-trained models rather than developing specialized systems from scratch. Resource requirements for training the largest models create barriers to entry that favor well-funded organizations and highlight the value of services like custom LLM creation.

Multimodal AI

Models capable of processing and integrating multiple data types simultaneously represent a significant advancement in AI capabilities.

- Models capable of processing and integrating multiple data types simultaneously, such as combining text and images.

Cross-modal understanding enables models to relate information across different types of inputs, such as connecting images with text descriptions. Unified interfaces allow users to interact with AI systems using whatever input modality is most convenient or appropriate. Applications include more natural human-computer interaction, improved accessibility features, and richer content generation capabilities.

Efficient Architectures and Edge AI

Development of smaller, optimized models enables AI deployment on resource-constrained devices and reduces environmental impact.

- Smaller, optimized models that run on local devices, enabling fast and privacy-preserving AI applications.

Model compression techniques maintain performance while reducing computational requirements, making AI more accessible and cost-effective. Edge deployment brings AI capabilities to mobile devices, embedded systems, and remote locations with limited connectivity. Energy efficiency considerations become increasingly important as AI adoption scales globally.
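Quantization is one widely used compression technique: weights stored as floating-point numbers are mapped to small integers plus a shared scale factor. The sketch below shows a symmetric 8-bit scheme on made-up weights. Real toolchains apply this per layer or per channel and often calibrate on sample data.

```python
def quantize_int8(weights):
    """Symmetric linear quantization of floats to integers in [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs else 1.0
    quantized = [round(w / scale) for w in weights]
    return quantized, scale

def dequantize(quantized, scale):
    """Recover approximate float weights from integers and the shared scale."""
    return [q * scale for q in quantized]

weights = [0.82, -1.27, 0.05, 0.0, 1.0, -0.33]   # hypothetical layer weights
quantized, scale = quantize_int8(weights)
restored = dequantize(quantized, scale)
max_error = max(abs(w - r) for w, r in zip(weights, restored))
print(quantized, scale, max_error)
```

Each weight now fits in one byte instead of four or eight, and the rounding error per weight is bounded by half the scale factor.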

Democratization and AI Accessibility

Tools and platforms that simplify AI development are making advanced capabilities available to a broader range of organizations and individuals.

- Tools and platforms that allow businesses and individuals with limited expertise to build and deploy AI solutions.

Low-code and no-code platforms enable domain experts to build AI applications without extensive programming knowledge. Pre-trained models and APIs provide access to sophisticated AI capabilities without requiring internal model development. Educational resources and community support lower barriers to AI adoption across different industries and organization sizes.

How Organizations Can Maximize Value With AI Models

Successfully leveraging AI models requires strategic planning, proper implementation, and ongoing commitment to best practices that ensure long-term success.

Developing custom AI solutions tailored to unique business needs often provides greater value than generic approaches. Organizations should assess their specific challenges, data assets, and objectives to identify where AI can create the most significant impact. This assessment should consider both technical feasibility and business value, focusing on applications where AI capabilities align with strategic priorities.

Prioritizing ethical, secure, and scalable AI deployment practices establishes a foundation for sustainable AI adoption. This includes implementing governance frameworks that ensure responsible development, robust security measures that protect sensitive data, and architectural approaches that can scale with growing demands. Organizations should also establish clear policies for data usage, model development, and system monitoring.

Investing in continuous optimization and training programs maintains long-term success as both technology and business requirements evolve. This involves staying current with advances in AI techniques, regularly updating models based on new data and changing conditions, and developing internal expertise through training and education programs. Organizations should also establish processes for measuring and improving AI system performance over time.

Partnering with AI consulting experts ensures seamless integration, governance, and ongoing support throughout the AI adoption journey. Experienced consultants bring deep expertise in both technical implementation and change management, helping organizations avoid common pitfalls while maximizing the value of their AI investments. This partnership approach enables organizations to focus on their core business while leveraging specialized expertise for AI strategy and implementation.

FAQs About AI Models

How long does it take to train an AI model?

Training time varies significantly based on model complexity, data size, and computational resources available. Simple models might train in minutes or hours, while complex deep learning models or foundation models can require days, weeks, or even months. The specific timeline depends on factors such as the amount of training data, model architecture, available hardware, and desired performance levels.

What skills are necessary to develop AI models?

Developing AI models typically requires knowledge in programming languages like Python or R, understanding of statistical concepts and machine learning algorithms, and familiarity with data preprocessing and analysis techniques. Domain expertise in the application area is also valuable for proper problem formulation and result interpretation. However, modern tools and platforms are making AI development more accessible to professionals with varying technical backgrounds.

How do AI models ensure security and privacy?

AI systems protect security and privacy through multiple layers of safeguards, including data encryption during storage and transmission, access controls that limit who can view or modify sensitive information, and compliance with relevant data protection regulations like GDPR or CCPA. Additional techniques include differential privacy for training on sensitive data, federated learning to keep data distributed, and regular security audits to identify and address vulnerabilities.

Can AI models be customized for different industries?

AI models can be extensively customized for different industries by training on domain-specific data, incorporating relevant business rules and constraints, and optimizing for industry-specific metrics and requirements. For example, healthcare models focus on patient safety and regulatory compliance, while financial models prioritize risk management and fraud detection. This customization often involves fine-tuning pre-trained models or developing entirely new architectures for specific applications.

What ongoing support do AI models need after deployment?

AI models require continuous monitoring to track performance metrics and detect issues like data drift or model degradation. Regular updates may be needed to incorporate new data, adjust to changing conditions, or improve performance. This includes retraining models periodically, updating software dependencies, monitoring for security vulnerabilities, and maintaining infrastructure. Organizations should also plan for scaling resources as usage grows and evolving models as business requirements change.