The artificial intelligence revolution has fundamentally transformed how organizations approach technology infrastructure. As businesses increasingly rely on AI capabilities to drive innovation, improve efficiency, and maintain competitive advantage, the need for comprehensive AI infrastructure has become critical. Understanding what constitutes an AI stack and how to implement one effectively can mean the difference between successful AI adoption and costly failed initiatives.

What Is an AI Stack

An AI stack is a collection of technologies, frameworks, and infrastructure components that work together to build, train, deploy, and manage artificial intelligence systems. Think of it like the foundation of a house – you can’t just throw random materials together and expect it to work. Every piece connects to create something that functions as a whole.

AI Stack Definition and Core Purpose

At its core, an AI stack serves as the technological foundation that lets organizations develop and deploy artificial intelligence solutions at scale. Unlike traditional software that handles things like email or websites, an AI technology stack must address challenges specific to machine learning. These include training models on very large datasets, tracking model versions alongside code, and serving predictions quickly enough for live applications.

The main purpose is simple: create an environment where different AI components work together seamlessly. This means data scientists can focus on solving problems instead of wrestling with technical setup issues.

How AI Stacks Differ from Traditional Technology Stacks

Regular technology stacks handle everyday computing tasks like processing transactions or serving web pages. Machine learning stacks work differently because AI workloads have requirements that general-purpose computing infrastructure was not designed to meet.

The key differences include:

- Specialized hardware – AI uses Graphics Processing Units (GPUs) and Tensor Processing Units (TPUs) that excel at doing many calculations at once

- Massive data processing – AI applications often train on datasets far larger than typical transactional business databases, commonly reaching terabytes or more

- Model management – AI systems need training pipelines, version control for trained models as well as code, and processes that retrain and redeploy models as data changes, which conventional software stacks generally do not need

Why Modern Organizations Need AI Infrastructure

Organizations that try to implement AI without proper infrastructure often run into serious problems: systems that behave inconsistently, security gaps, and an inability to handle more users or data over time.

A well-designed AI stack provides the structure and standardization that complex enterprise AI projects need. More importantly, a comprehensive AI stack supports the complete AI lifecycle, from initial data exploration through production deployment and ongoing optimization.

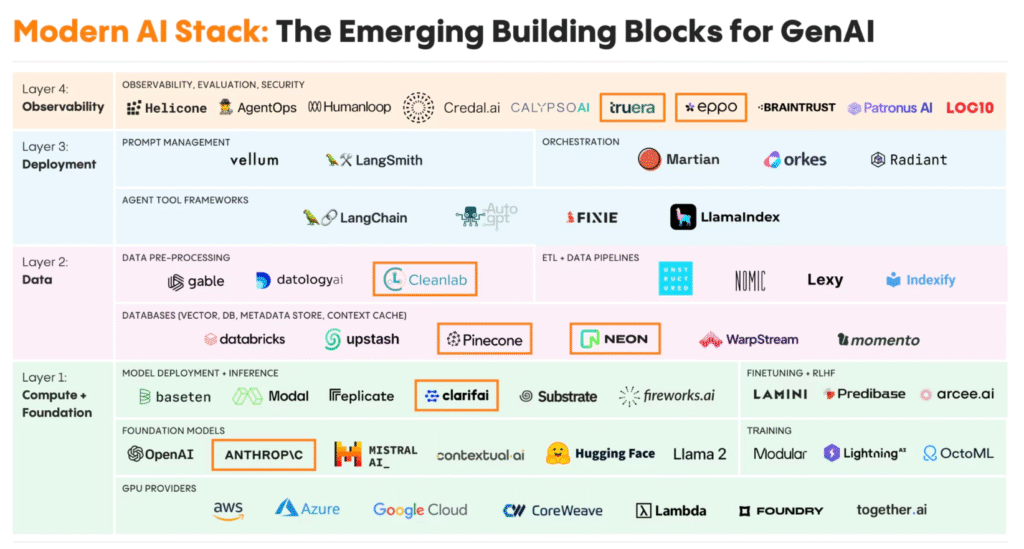

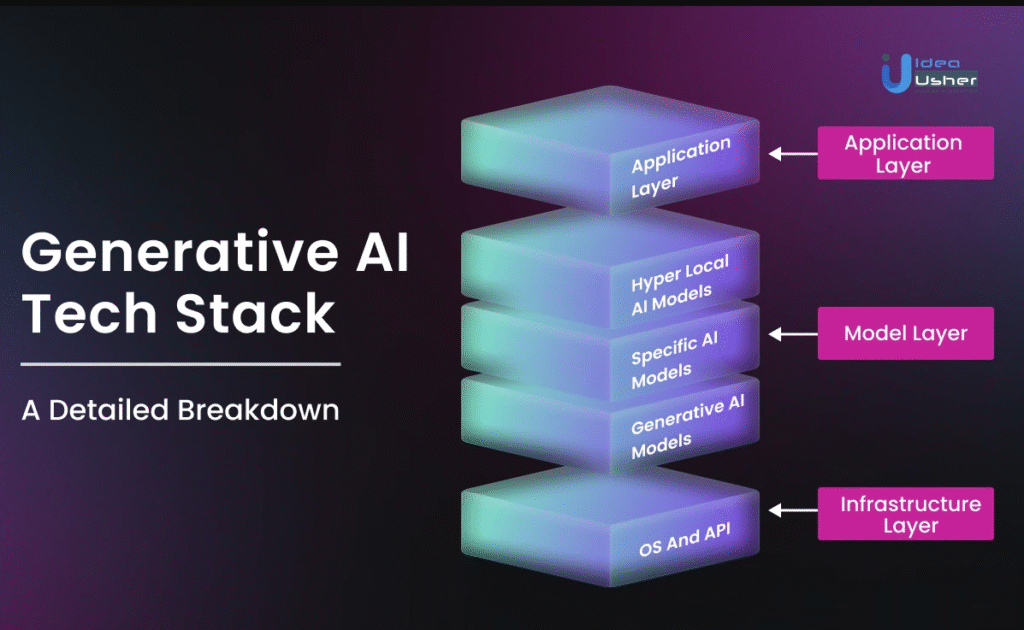

Core Components of an AI Stack Architecture

Modern AI platform architecture is typically organized into four main layers. Each layer handles specific tasks while working with the others to create a complete system.

Infrastructure Layer

The infrastructure layer provides the foundation – the computational power, storage systems, and networking that AI operations require. This includes specialized hardware like AI accelerators and high-performance storage systems designed for the massive data requirements typical of AI workloads.

This layer supports both development activities (like training models) and production operations (like making predictions in real-time). The infrastructure balances performance with cost while providing the ability to scale up as AI workloads grow.

Data Layer

The data layer manages the information that machine learning models use for training and making predictions. This layer handles complex tasks including bringing data in from multiple sources, cleaning and validating data quality, and transforming raw data into formats that AI algorithms can use.

Modern data layers include specialized technologies like vector databases. These databases store embeddings, which are numeric vector representations of text, images, or other data. They are also optimized for similarity search. That combination has made them important for natural language processing applications and for retrieval-augmented generation (RAG) systems, which retrieve relevant information to augment AI responses.
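The similarity search at the heart of a vector database can be illustrated in a few lines. The sketch below is a minimal, brute-force version that assumes small hand-written embeddings. The store, the document IDs, and the function names are all hypothetical. Production vector databases typically use approximate nearest-neighbour indexes such as HNSW rather than scanning every stored vector.

```python
import math

# A toy in-memory "vector store" mapping hypothetical document IDs to embeddings.
# This does an exact brute-force scan; real vector databases use ANN indexes.


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two vectors (1.0 means same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def top_k(query: list[float], store: dict[str, list[float]], k: int = 2) -> list[tuple[str, float]]:
    """Return the k stored IDs most similar to the query, best match first."""
    scored = [(doc_id, cosine_similarity(query, vec)) for doc_id, vec in store.items()]
    scored.sort(key=lambda item: item[1], reverse=True)
    return scored[:k]


# Made-up 3-dimensional embeddings; real embeddings have hundreds of dimensions.
store = {
    "refund-policy": [0.9, 0.1, 0.0],
    "shipping-times": [0.1, 0.9, 0.1],
    "return-window": [0.8, 0.2, 0.1],
}
print(top_k([1.0, 0.0, 0.0], store, k=2))
```

In a RAG system, the query vector would come from embedding the user's question. The top-ranked documents would then be passed to the model as context.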

Model Layer

The model layer contains the actual AI algorithms and machine learning processes that turn data into insights. This includes comprehensive AI frameworks like TensorFlow and PyTorch, model lifecycle management tools, and specialized platforms for different AI domains like computer vision and natural language processing.

Effective model layers include version control systems, experiment tracking tools, automated training pipelines, and performance monitoring systems. These ensure models maintain accuracy and effectiveness over time.

Application Layer

The application layer connects AI capabilities with end users through interfaces and business applications. This layer includes user interface frameworks, API management systems, and integration platforms that embed AI capabilities into existing business processes.

The application layer addresses user experience considerations like response time optimization and intuitive interfaces that let non-technical users benefit from sophisticated AI capabilities.

AI Stack Hardware Requirements

AI workloads require specialized processing that general-purpose processors were not designed to handle efficiently. The computational demands are fundamentally different from those of traditional business software.

Graphics Processing Units for AI Workloads

Graphics Processing Units have become the dominant platform for AI workloads because of their architecture. GPUs contain thousands of processing cores that can perform calculations simultaneously. This makes them well suited to the matrix operations that dominate machine learning computations.
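To see why this parallelism matters, consider a dense neural network layer. Ignoring the bias term, such a layer is a matrix multiplication. The plain-Python sketch below runs on a CPU and only shows the structure of the work: each output cell is an independent dot product. A GPU can spread exactly this kind of independent work across its cores. The input and weight values are made up.

```python
def matmul(a: list[list[float]], b: list[list[float]]) -> list[list[float]]:
    """Multiply an (n x k) matrix by a (k x m) matrix."""
    n, k, m = len(a), len(b), len(b[0])
    if any(len(row) != k for row in a):
        raise ValueError("inner dimensions must match")
    out = [[0.0] * m for _ in range(n)]
    # Each (i, j) cell depends only on row i of `a` and column j of `b`, so all
    # n * m cells could be computed at the same time. A GPU assigns independent
    # cells like these to many cores instead of looping over them one by one.
    for i in range(n):
        for j in range(m):
            out[i][j] = sum(a[i][p] * b[p][j] for p in range(k))
    return out


# A dense layer applied to a batch of 2 inputs with 3 features each,
# producing 2 outputs per input: outputs = inputs @ weights (no bias).
inputs = [[1.0, 2.0, 3.0],
          [4.0, 5.0, 6.0]]
weights = [[0.1, 0.2],
           [0.3, 0.4],
           [0.5, 0.6]]
print(matmul(inputs, weights))
```

Frameworks such as PyTorch and TensorFlow hand these operations to optimized GPU kernels, so developers rarely write loops like this themselves.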

Enterprise AI implementations often use GPU clusters with multiple high-performance graphics processors. This setup enables organizations to train complex neural networks and perform real-time inference operations at scale.

Central Processing Units and System Coordination

While specialized AI accelerators handle intensive computational tasks, Central Processing Units still play important roles in AI deployment platforms. CPUs handle coordination, control, and preprocessing operations that complement specialized AI hardware.

Modern server CPUs offer many cores with simultaneous multithreading, which helps with data preprocessing, model management, and system coordination tasks. Some recent CPUs also add instruction-set extensions aimed at AI math, such as Intel's Advanced Matrix Extensions (AMX).

Tensor Processing Units and Specialized AI Chips

Tensor Processing Units, developed by Google, are processors designed specifically for machine learning workloads rather than adapted from graphics processing. They optimize the mathematical operations required for neural network training and inference. For these workloads, they can offer better performance and energy efficiency than general-purpose processors.

TPUs are built around dedicated matrix multiply units, implemented as systolic arrays, that are optimized for tensor operations. Tensors are the multi-dimensional arrays that represent data and parameters within neural networks. For deep learning workloads that map well onto this design, TPUs can deliver strong performance with better performance per watt than comparable GPU-based systems. Actual results vary by model and workload.

Storage Systems and Memory Architecture

Storage infrastructure within AI stacks handles the massive data volumes required for machine learning model training while providing fast access speeds necessary for efficient operations. AI stack components for storage typically implement tiered architectures combining high-speed solid-state drives for active training data with more economical storage solutions for long-term data retention.

These storage systems support specialized access patterns including random access for training data sampling and sequential access for batch processing operations.

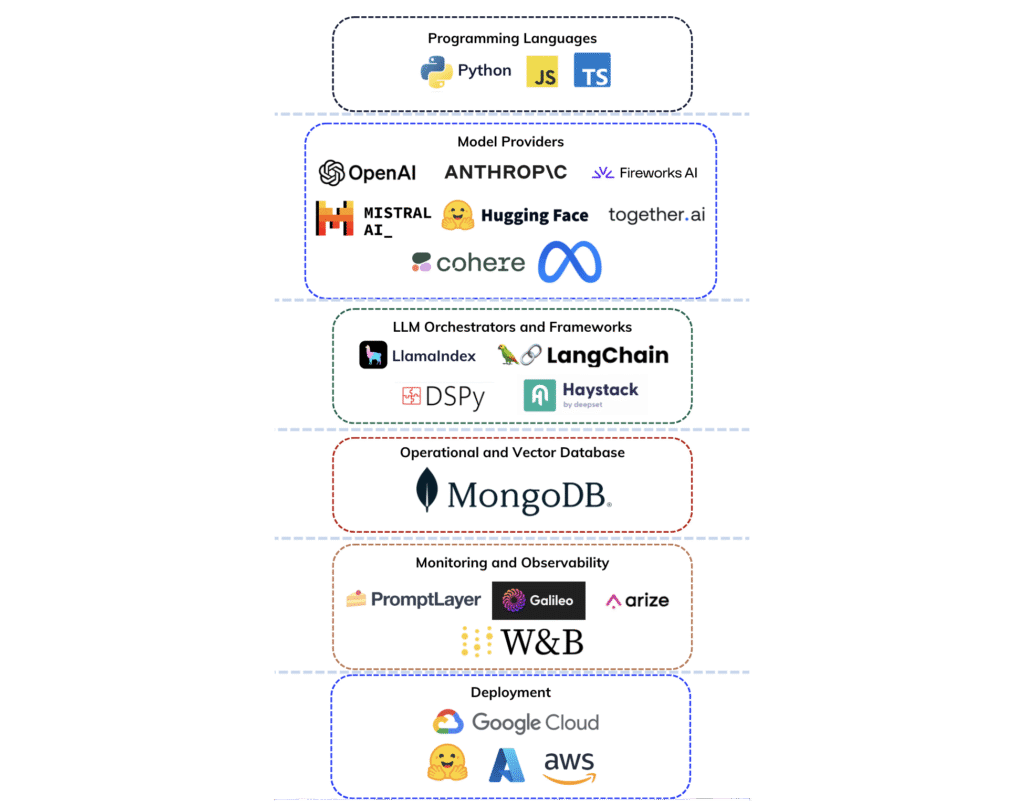

Essential AI Stack Software and Frameworks

The software component includes frameworks, libraries, and development platforms specifically designed to support machine learning and artificial intelligence application development.

Machine Learning Development Frameworks

Machine learning frameworks provide libraries for implementing complex algorithms and neural network architectures. Leading frameworks like TensorFlow and PyTorch have become industry standards, and each has a broad ecosystem of libraries for different AI domains.

These frameworks abstract hardware complexity while providing developers with sophisticated capabilities for algorithm implementation and model development. Modern frameworks include high-level APIs for rapid prototyping alongside low-level interfaces for fine-grained control over model behavior.

Data Processing and Pipeline Tools

Data processing platforms provide capabilities for transforming raw data into formats suitable for machine learning algorithms. These tools handle diverse data types including:

- Structured databases – Traditional business data in tables and rows

- Unstructured content – Text documents, images, and multimedia files

- Streaming data – Real-time information from sensors, user interactions, and other live sources

Effective data processing platforms include automated pipeline frameworks for reliable data ingestion and transformation, feature store systems that manage engineered features for model training, and data quality monitoring systems.
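One important job of a feature store is to return feature values as they were at a given moment. This way, a training example built for time T never sees data recorded after T, a property often called point-in-time correctness. The sketch below is a minimal in-memory illustration of that idea. The class name, its methods, and the example feature are hypothetical and do not correspond to any particular product's API.

```python
import bisect
from collections import defaultdict
from typing import Optional


class InMemoryFeatureStore:
    """Hypothetical minimal feature store keyed by (entity_id, feature_name)."""

    def __init__(self) -> None:
        # (entity_id, feature) -> parallel lists of timestamps (sorted) and values
        self._times: dict[tuple[str, str], list[int]] = defaultdict(list)
        self._values: dict[tuple[str, str], list[float]] = defaultdict(list)

    def write(self, entity_id: str, feature: str, ts: int, value: float) -> None:
        """Record a feature value observed at timestamp `ts`, keeping time order."""
        key = (entity_id, feature)
        pos = bisect.bisect_right(self._times[key], ts)
        self._times[key].insert(pos, ts)
        self._values[key].insert(pos, value)

    def read_as_of(self, entity_id: str, feature: str, ts: int) -> Optional[float]:
        """Latest value written at or before `ts`, or None if none existed yet."""
        key = (entity_id, feature)
        times = self._times.get(key, [])
        pos = bisect.bisect_right(times, ts)
        return self._values[key][pos - 1] if pos > 0 else None


store = InMemoryFeatureStore()
store.write("customer-42", "orders_last_30d", ts=100, value=3.0)
store.write("customer-42", "orders_last_30d", ts=200, value=5.0)
# A training label observed at ts=150 must only see the value from ts=100.
print(store.read_as_of("customer-42", "orders_last_30d", ts=150))
```

Serving the same stored values to both training and online inference also helps keep the features a model was trained on consistent with the features it sees in production.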

Model Deployment and Orchestration Platforms

Orchestration and automation platforms manage complex AI workflows, from data preprocessing through model training to production deployment. These platforms include workflow management systems that coordinate multi-step AI processes and resource scheduling systems that optimize utilization of computational infrastructure.

Modern orchestration platforms also provide monitoring and alerting capabilities that ensure AI systems maintain performance and availability standards required for production environments.
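At its simplest, workflow management means running steps in dependency order and running independent steps in parallel. The sketch below shows that ordering using Python's standard-library `graphlib` module (Python 3.9+). The pipeline and its step names are hypothetical. Real orchestrators add scheduling, retries, and monitoring on top of this core logic.

```python
from graphlib import TopologicalSorter

# Hypothetical AI pipeline: each step maps to the set of steps it depends on.
PIPELINE = {
    "ingest": set(),
    "validate": {"ingest"},
    "profile": {"ingest"},
    "build_features": {"validate", "profile"},
    "train": {"build_features"},
    "evaluate": {"train"},
    "deploy": {"evaluate"},
}


def execution_waves(dag: dict[str, set[str]]) -> list[list[str]]:
    """Group steps into waves; every step in a wave has its dependencies met."""
    sorter = TopologicalSorter(dag)
    sorter.prepare()  # raises graphlib.CycleError if dependencies form a loop
    waves = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())
        # A real orchestrator would dispatch these steps to workers in parallel here.
        waves.append(ready)
        sorter.done(*ready)
    return waves


for wave in execution_waves(PIPELINE):
    print(wave)
```

Here `validate` and `profile` both depend only on `ingest`, so they land in the same wave and could share the cluster's resources concurrently.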

Monitoring and Performance Management Solutions

Monitoring and performance management solutions provide visibility into AI system operation and performance across all stack components. These systems track key performance indicators including model accuracy, inference latency, resource utilization, and data quality metrics.

Effective monitoring solutions include automated alerting capabilities that notify operators of performance issues before they impact business operations.
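As a concrete example, an alert can fire when tail latency crosses a threshold. The sketch below computes a nearest-rank 95th percentile over recent inference latencies and returns an alert message when it exceeds a limit. The 100 ms limit and the sample values are illustrative assumptions, not recommended settings.

```python
import math
from typing import Optional


def percentile(values: list[float], pct: float) -> float:
    """Nearest-rank percentile: smallest sample with at least pct% of data at or below it."""
    if not values:
        raise ValueError("no samples")
    ordered = sorted(values)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


def check_latency(samples_ms: list[float], p95_limit_ms: float) -> Optional[str]:
    """Return an alert message if p95 latency exceeds the limit, else None."""
    p95 = percentile(samples_ms, 95)
    if p95 > p95_limit_ms:
        return f"ALERT: p95 latency {p95:.0f} ms exceeds {p95_limit_ms:.0f} ms"
    return None


# Hypothetical window of 20 recent request latencies in milliseconds.
samples = [50.0] * 18 + [120.0, 300.0]
print(check_latency(samples, p95_limit_ms=100.0))
```

Percentiles are used instead of averages because a few slow requests can hurt users badly while barely moving the mean.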

Data Management Systems in AI Stacks

Data management is one of the most complex aspects of an AI stack, because machine learning models depend heavily on high-quality, well-organized, and accessible data.

Data Storage Solutions for AI Applications

AI data storage systems handle massive datasets while providing the performance characteristics necessary for efficient machine learning operations. Traditional database systems often prove inadequate due to the volume, variety, and speed requirements of machine learning data.

Modern AI storage solutions implement distributed architectures that can scale horizontally across multiple servers. This provides both the capacity to store huge amounts of training data and the speed necessary to feed high-performance computational resources.

Specialized database technologies like vector databases have emerged to address unique requirements of modern AI applications, particularly for similarity searches and storing vector representations of data.

Data Processing Pipeline Architecture

Data processing pipelines transform raw data from diverse sources into clean, consistent, and feature-rich datasets suitable for machine learning algorithms. These pipelines handle complex transformation operations including:

- Data cleansing – Removing errors, duplicates, and inconsistencies

- Feature engineering – Creating relevant input variables for machine learning models

- Data augmentation – Increasing the effective size and diversity of training datasets
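The three transformations listed above can be chained as simple functions. The sketch below works on made-up tabular records. It uses a derived "average spend per order" feature and random jitter as its augmentation. Both choices are illustrative assumptions. Augmentation is far more common for images and text, where it means operations like cropping or paraphrasing.

```python
import random

# Hypothetical raw records: (customer_id, total_spend, num_orders)
raw = [
    ("c1", 120.0, 4),
    ("c1", 120.0, 4),   # exact duplicate
    ("c2", None, 2),    # missing value
    ("c3", 90.0, 3),
]


def cleanse(records):
    """Drop rows with missing fields and exact duplicates (keeping the first)."""
    seen, clean = set(), []
    for rec in records:
        if any(field is None for field in rec) or rec in seen:
            continue
        seen.add(rec)
        clean.append(rec)
    return clean


def engineer_features(records):
    """Add a derived input variable: average spend per order."""
    return [(cid, spend, n, spend / n) for cid, spend, n in records if n > 0]


def augment(records, copies=1, noise=0.05, seed=0):
    """Append copies of each row with monetary values jittered by up to +/- noise."""
    rng = random.Random(seed)
    out = list(records)
    for _ in range(copies):
        for cid, spend, n, avg in records:
            factor = 1 + rng.uniform(-noise, noise)
            out.append((cid, spend * factor, n, avg * factor))
    return out


dataset = augment(engineer_features(cleanse(raw)))
print(len(dataset))
```

Keeping each stage a separate function makes it easy to test stages in isolation and to rerun only the stages affected by a change.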

Modern data processing systems include automated quality monitoring that can detect when data changes in ways that might impact model performance.
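One widely used way to detect such changes is the Population Stability Index (PSI). It compares how a feature's values are distributed in training data with how they are distributed in recent production data. The sketch below assumes equal-width bins derived from the training data. A frequently cited rule of thumb treats a PSI above roughly 0.25 as a significant shift, but thresholds should be tuned per feature.

```python
import math


def psi(expected: list[float], actual: list[float], bins: int = 5, eps: float = 1e-6) -> float:
    """Population Stability Index between a reference sample and a new sample."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # avoid zero width if all values are equal

    def proportions(values: list[float]) -> list[float]:
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            idx = min(max(idx, 0), bins - 1)  # clamp out-of-range values into edge bins
            counts[idx] += 1
        # Floor at eps so empty bins do not cause log(0) or division by zero.
        return [max(c / len(values), eps) for c in counts]

    e = proportions(expected)
    a = proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ai, ei in zip(a, e))


train = [i / 100 for i in range(100)]           # reference (training) values
production = [0.8 + i / 500 for i in range(100)]  # hypothetical shifted live values
print(f"PSI = {psi(train, production):.2f}")
```

Running a check like this on a schedule for each important feature is one way to trigger investigation or retraining before accuracy visibly drops.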

Data Quality and Governance Management

Data governance systems provide capabilities for data lineage tracking (knowing where data came from and how it was processed), access control and security, and privacy protection measures. These systems also support audit trails and compliance reporting.

MLOps (machine learning operations) teams increasingly need comprehensive governance to satisfy regulations and internal policies for AI development and deployment.

Real-time Processing vs Batch Processing Systems

Batch processing systems optimize for handling large volumes of historical data cost-effectively. Real-time processing systems prioritize low latency for applications that need immediate answers, such as fraud checks on live transactions. Running both side by side requires scheduling and resource management that keeps batch jobs from starving latency-sensitive workloads of compute.
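The difference shows up even for a simple statistic such as an average. A batch job waits for the full dataset and computes the answer once. A streaming job updates its answer after every event while using constant memory. The sketch below contrasts the two approaches. The readings are made-up values.

```python
from typing import Iterable, Iterator


def batch_mean(values: list[float]) -> float:
    """Batch: all data is available up front; compute once over the full set."""
    return sum(values) / len(values)


def streaming_mean(events: Iterable[float]) -> Iterator[float]:
    """Real-time: update the statistic incrementally as each event arrives."""
    count, mean = 0, 0.0
    for x in events:
        count += 1
        mean += (x - mean) / count  # incremental mean update, O(1) memory
        yield mean


readings = [2.0, 4.0, 6.0, 8.0]
print(batch_mean(readings))
for running in streaming_mean(readings):
    print(running)
```

Both end at the same value. The streaming version simply has an answer available after every event, which is what latency-sensitive applications need.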

AI Stack Security and Governance Framework

Security and governance frameworks ensure artificial intelligence systems operate safely, ethically, and in compliance with regulatory requirements.

Security Requirements for AI Infrastructure

Security considerations within AI stacks extend beyond traditional information security to address unique vulnerabilities specific to artificial intelligence systems. These security challenges include:

- Adversarial attacks – Attempts to manipulate AI model behavior through specially crafted inputs

- Data poisoning attacks – Corrupting training datasets to influence model performance

- Model extraction attacks – Repeatedly querying a deployed model to reconstruct a working copy, exposing proprietary algorithms and intellectual property

AI security frameworks implement specialized monitoring and detection systems that can identify AI-specific threats in real-time operational environments.
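To make the first threat in the list concrete, the sketch below applies the Fast Gradient Sign Method (FGSM) to a tiny logistic regression model with hand-picked weights. It assumes white-box access to the weights, which a real attacker may not have. Attacks on deep networks use the same idea but compute the gradient with a framework's automatic differentiation.

```python
import math


def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))


def predict(w: list[float], b: float, x: list[float]) -> float:
    """Probability of class 1 under a logistic regression model."""
    return sigmoid(sum(wi * xi for wi, xi in zip(w, x)) + b)


def sign(v: float) -> int:
    return (v > 0) - (v < 0)


def fgsm(w: list[float], b: float, x: list[float], y: int, epsilon: float) -> list[float]:
    """Shift each feature by epsilon in the direction that increases the log-loss
    for the true label y (0 or 1)."""
    p = predict(w, b, x)
    # For logistic regression, the gradient of log-loss w.r.t. x_i is (p - y) * w_i.
    grad = [(p - y) * wi for wi in w]
    return [xi + epsilon * sign(g) for xi, g in zip(x, grad)]


# Hypothetical model and input correctly classified as class 1.
w, b = [2.0, -1.0], 0.0
x = [1.0, 0.5]
x_adv = fgsm(w, b, x, y=1, epsilon=0.5)
print(f"{predict(w, b, x):.3f} -> {predict(w, b, x_adv):.3f}")
```

A small, structured change to the input drops the model's confidence in the correct class from about 0.82 to 0.50. Defenses such as adversarial training and input monitoring target exactly this kind of perturbation.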

Compliance and Regulatory Considerations

Data privacy and protection represent critical aspects of AI security frameworks, as machine learning systems typically require access to large volumes of potentially sensitive information. Privacy protection strategies address data minimization principles, anonymization techniques, and access control systems.
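Two of these strategies can be sketched together. Data minimization keeps only the fields a model needs. Pseudonymization replaces a direct identifier with a keyed hash (HMAC-SHA256). The field names, the record, and the key below are hypothetical. Note that this is pseudonymization, not full anonymization. Anyone holding the key can recompute the token for a known identifier and link records back to a person, so the key must be protected like any other secret.

```python
import hashlib
import hmac

# Hypothetical: the only fields the model actually needs.
ALLOWED_FIELDS = {"age_band", "region", "purchase_count"}


def pseudonymize(identifier: str, key: bytes) -> str:
    """Replace a direct identifier with a stable keyed hash (hex string)."""
    return hmac.new(key, identifier.encode("utf-8"), hashlib.sha256).hexdigest()


def minimize(record: dict, key: bytes) -> dict:
    """Keep only allowed fields and swap the email for a pseudonymous subject ID."""
    out = {k: v for k, v in record.items() if k in ALLOWED_FIELDS}
    out["subject_id"] = pseudonymize(record["email"], key)
    return out


record = {
    "email": "ana@example.com",
    "full_name": "Ana Silva",
    "age_band": "30-39",
    "region": "EU",
    "purchase_count": 7,
}
key = b"example-key-load-from-a-secrets-manager"  # never hard-code real keys
print(minimize(record, key))
```

Because the same email always maps to the same token under one key, records can still be joined for training without exposing the raw identifier.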

Industry-specific compliance rules add complexity for AI implementations in regulated sectors like healthcare, financial services, and transportation. Meeting them often calls for specialized AI stack components and governance procedures.

Risk Management and Audit Strategies

Model governance and lifecycle management provide capabilities for ensuring AI systems remain accurate, fair, and aligned with organizational objectives throughout their operational lifetime. Effective model governance includes:

- Version control systems – Tracking changes to models and enabling rollback when necessary

- Performance monitoring – Detecting degradation in accuracy metrics

- Automated testing frameworks – Validating model behavior against established criteria
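These three practices fit together naturally in a model registry. The sketch below is a minimal, hypothetical registry. Versions are append-only. Promotion to production passes through an automated accuracy gate. Rollback repoints production to the previously promoted version. The class names, the accuracy metric, and the storage URIs are illustrative assumptions, not any specific tool's API.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class ModelVersion:
    version: int
    artifact_uri: str  # hypothetical location of the stored model artifact
    metrics: dict


@dataclass
class ModelRegistry:
    """Hypothetical minimal registry: versions are append-only; production is a pointer."""
    versions: list[ModelVersion] = field(default_factory=list)
    production_history: list[int] = field(default_factory=list)  # oldest first

    def register(self, artifact_uri: str, metrics: dict) -> ModelVersion:
        mv = ModelVersion(len(self.versions) + 1, artifact_uri, metrics)
        self.versions.append(mv)
        return mv

    def promote(self, version: int, min_accuracy: float = 0.0) -> None:
        if not 1 <= version <= len(self.versions):
            raise ValueError(f"unknown version {version}")
        # Automated gate: refuse to promote a model below the agreed accuracy bar.
        accuracy = self.versions[version - 1].metrics.get("accuracy", 0.0)
        if accuracy < min_accuracy:
            raise ValueError(f"v{version} accuracy {accuracy} < required {min_accuracy}")
        self.production_history.append(version)

    def rollback(self) -> int:
        """Revert production to the previously promoted version and return its number."""
        if len(self.production_history) < 2:
            raise RuntimeError("no earlier production version to roll back to")
        self.production_history.pop()
        return self.production_history[-1]

    @property
    def production(self) -> Optional[ModelVersion]:
        if not self.production_history:
            return None
        return self.versions[self.production_history[-1] - 1]


registry = ModelRegistry()
registry.register("s3://models/churn/v1", {"accuracy": 0.91})
registry.register("s3://models/churn/v2", {"accuracy": 0.93})
registry.promote(1, min_accuracy=0.90)
registry.promote(2, min_accuracy=0.90)
# Suppose monitoring later shows v2 degrading in production:
print(registry.rollback())
```

Because old versions are never overwritten, rollback is just a pointer change. That is what makes it fast enough to use during an incident.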

The governance framework also addresses model interpretability and explainability requirements, giving stakeholders insight into how AI systems reach their decisions.

Privacy Protection and Data Security Measures

Comprehensive privacy protection should be built into the whole AI pipeline, from initial data collection through model training to final application deployment. This includes privacy-preserving machine learning techniques such as differential privacy and federated learning. It also includes secure computation methods such as homomorphic encryption and secure multi-party computation, along with other approaches that protect individual privacy while preserving data utility for AI applications.
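Differential privacy is the easiest of these techniques to illustrate. The sketch below applies the classic Laplace mechanism to a counting query. Adding or removing one person changes a count by at most 1 (its sensitivity), so adding Laplace noise with scale 1/epsilon gives epsilon-differential privacy. This is a teaching sketch only. Naive floating-point noise sampling has known weaknesses, and Python's `random` is not cryptographically secure, so production systems should use a vetted differential privacy library.

```python
import random


def laplace_noise(scale: float, rng: random.Random) -> float:
    """Sample Laplace(0, scale) as the difference of two exponential draws."""
    return rng.expovariate(1 / scale) - rng.expovariate(1 / scale)


def private_count(true_count: int, epsilon: float, rng: random.Random) -> float:
    """Laplace mechanism for a counting query (sensitivity 1)."""
    sensitivity = 1.0
    return true_count + laplace_noise(sensitivity / epsilon, rng)


rng = random.Random(42)  # fixed seed only so the demo is repeatable
print(private_count(1_000, epsilon=0.5, rng=rng))
```

Smaller epsilon values add more noise and give stronger privacy. Larger values give more accurate answers. Choosing epsilon is a policy decision as much as a technical one.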

How to Implement an AI Stack for Your Organization

Successful AI stack implementation requires strategic planning that addresses technical, organizational, and operational considerations while aligning with business objectives.

Infrastructure Assessment and Requirements Planning

Organizations begin with a detailed analysis of computational needs: processing power, storage capacity and throughput, and network bandwidth for distributed AI operations. This assessment also evaluates existing staff capabilities and identifies training or hiring needs.

The planning process includes evaluation of current systems and identification of integration requirements, ensuring that new AI infrastructure can work effectively with existing enterprise systems and processes.

AI Stack Architecture Design and Component Selection

Architecture design balances multiple objectives including performance optimization, cost management, scalability requirements, and integration with existing enterprise systems. This involves selecting appropriate hardware platforms based on specific AI workload characteristics and choosing software frameworks that align with organizational capabilities.

The design process addresses deployment models including on-premises, cloud-based, and hybrid approaches, evaluating trade-offs between control, cost, and operational complexity.

Deployment Strategy and Integration Planning

Component integration and testing phases require systematic validation of AI stack functionality across all architectural layers. This process typically involves establishing development and testing environments that mirror production configurations and implementing automated testing frameworks.

The integration process includes validation of individual component functionality, integration testing across component boundaries, and performance testing under realistic workload conditions.

Testing, Validation, and Performance Optimization

Testing includes security testing to identify and address potential vulnerabilities alongside functional and performance validation. The testing framework provides comprehensive coverage of all system components while ensuring the integrated system meets performance and reliability requirements.

Performance optimization addresses both individual component performance and system-wide efficiency, ensuring the AI stack can meet business requirements while operating cost-effectively.

Ongoing Monitoring and Continuous Improvement

Deployment and operational considerations require careful planning of rollout strategies, change management processes, and ongoing operational procedures. Successful deployment strategies typically involve phased rollouts that minimize risk while providing opportunities to validate system performance.

Ongoing monitoring and optimization represent continuous responsibilities that ensure AI stacks continue to meet performance and business objectives as workloads evolve and scale over time.
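A common way to implement the phased rollouts mentioned above is to hash each user ID into a stable bucket from 0 to 99. Users whose bucket falls below the current rollout percentage are sent to the new model. The sketch below assumes hypothetical labels `"candidate"` and `"stable"` for the two model versions. Because buckets are stable, users who received the new model at 10% keep it at 50%, which keeps their experience consistent.

```python
import hashlib


def rollout_bucket(user_id: str) -> int:
    """Map a user ID to a stable bucket in [0, 100)."""
    digest = hashlib.sha256(user_id.encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big") % 100


def choose_model(user_id: str, canary_percent: int) -> str:
    """Route a fixed slice of users to the new model during a phased rollout."""
    return "candidate" if rollout_bucket(user_id) < canary_percent else "stable"


for phase in (1, 10, 50, 100):
    share = sum(choose_model(f"user-{i}", phase) == "candidate" for i in range(10_000)) / 10_000
    print(f"{phase}% phase -> {share:.1%} of simulated users on the candidate model")
```

Between phases, teams compare monitoring metrics for the two groups. The rollout widens only if the candidate holds up.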

AI Stack Implementation Best Practices

Strategic recommendations for successful AI stack deployment focus on common challenges and proven solutions that enable organizations to maximize return on AI infrastructure investments.

Performance Optimization Strategies

Performance optimization techniques focus on maximizing AI system efficiency while balancing speed, accuracy, and resource utilization. This includes implementing performance monitoring systems that track key metrics across all AI stack components and establishing capacity planning processes.

Optimization strategies address both technical performance metrics like computational utilization and response times, as well as business performance indicators like model accuracy and user satisfaction measures.

Cost Management and Resource Allocation

Cost management approaches focus on controlling expenses while maintaining AI capabilities, optimizing hardware and software investments to deliver maximum business value. This includes implementing resource allocation strategies that ensure efficient utilization of expensive computational resources.

Organizations establish cost monitoring and allocation systems that provide visibility into AI infrastructure expenses and enable optimization of resource usage based on business priorities.

Scalability Planning for Growing AI Demands

Scalability planning involves designing infrastructure that can expand with business needs while avoiding bottlenecks as AI usage increases. This requires careful architecture design that anticipates future growth requirements and incorporates flexible deployment models.

Scalability strategies address both technical scaling capabilities and organizational scaling requirements, ensuring that teams and processes can adapt to increased AI adoption and more complex use cases.

Team Structure and Skill Development Requirements

Building organizational capabilities for AI stack management requires identifying necessary expertise and training needs while establishing team structures that can effectively manage complex AI infrastructure. This includes developing training programs that enable existing staff to acquire AI-specific skills.

Organizations establish clear roles and responsibilities for AI stack management while ensuring adequate technical expertise across all critical system components.

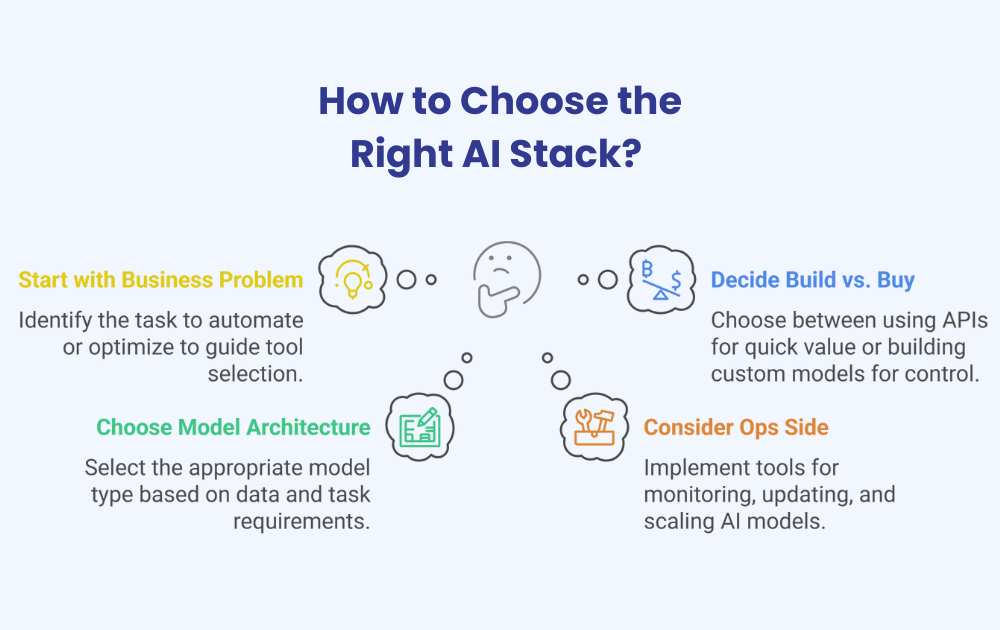

Choosing the Right AI Stack for Your Business Needs

The decision framework for selecting appropriate AI stack components involves systematic evaluation of multiple factors that influence long-term success and return on investment.

Business Requirements and Use Case Assessment

Aligning AI stack capabilities with specific business objectives requires thorough understanding of what the organization wants to achieve with AI technology. This assessment identifies specific use cases, performance requirements, and integration needs that influence technology selection decisions.

The requirements assessment also considers future business needs and growth projections to ensure AI stack investments can support evolving organizational requirements.

Technology Evaluation and Selection Criteria

Key factors for comparing different AI stack options include:

- Performance capabilities – Can the technology handle your workload requirements?

- Compatibility – How well does it integrate with existing systems?

- Vendor support quality – What level of assistance and documentation is available?

- Future-proofing considerations – Will this technology remain viable as requirements evolve?

The evaluation process includes technical assessments, proof-of-concept implementations, and detailed cost-benefit analysis.
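A weighted scoring matrix is one simple way to turn these criteria into a comparable number. The sketch below assumes hypothetical weights and 1 to 5 ratings for two unnamed options. In practice, the weights should reflect the business requirements gathered earlier, and the ratings should come from proof-of-concept results rather than vendor claims.

```python
# Hypothetical weights (summing to 1.0) for the criteria listed above.
WEIGHTS = {
    "performance": 0.35,
    "compatibility": 0.25,
    "vendor_support": 0.20,
    "future_proofing": 0.20,
}


def weighted_score(scores: dict[str, float]) -> float:
    """Combine 1-5 ratings per criterion into a single weighted score."""
    missing = WEIGHTS.keys() - scores.keys()
    if missing:
        raise ValueError(f"missing ratings for: {sorted(missing)}")
    return sum(WEIGHTS[c] * scores[c] for c in WEIGHTS)


# Hypothetical ratings for two candidate stacks.
options = {
    "option_a": {"performance": 5, "compatibility": 3, "vendor_support": 4, "future_proofing": 3},
    "option_b": {"performance": 4, "compatibility": 5, "vendor_support": 3, "future_proofing": 4},
}
ranking = sorted(options, key=lambda name: weighted_score(options[name]), reverse=True)
for name in ranking:
    print(name, round(weighted_score(options[name]), 2))
```

The strongest raw performer does not necessarily win. Here, better compatibility and future-proofing outweigh option A's performance edge.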

Vendor Analysis and Integration Considerations

Evaluating suppliers requires assessment of technical capabilities, support quality, financial stability, and strategic alignment with organizational objectives. The analysis also evaluates integration requirements and compatibility with existing technology investments.

Vendor selection considers both technical factors and business relationship factors that influence long-term success and satisfaction with AI stack investments.

Total Cost of Ownership and ROI Analysis

Understanding the complete financial impact of AI stack implementation requires analysis of all costs including initial investment, ongoing operational expenses, and indirect costs like training and support. ROI analysis focuses on specific business benefits and measurable improvements in operational efficiency or business outcomes.

The financial analysis considers both quantitative benefits like cost savings and productivity improvements, as well as qualitative benefits like improved decision-making capabilities and competitive advantages.

Building Your AI Future with Strategic Infrastructure Planning

The implementation of comprehensive AI stack architecture represents a strategic investment that positions organizations to capitalize on the transformative potential of artificial intelligence while ensuring sustainable, scalable, and secure AI operations.

Success in AI stack implementation requires a careful balance of technical capabilities, organizational readiness, and strategic alignment with business objectives. Organizations that invest in robust, flexible, and well-governed AI stack architectures today will be best positioned to take advantage of future technological advances and maintain competitive advantages in an increasingly AI-driven business environment.

The complexity and strategic importance of AI stack implementation often benefit from expert guidance and specialized knowledge. Professional AI consulting services can provide valuable assistance in architecture design, technology selection, and implementation planning, helping organizations avoid common pitfalls while accelerating time-to-value for AI investments.